Quick Summary: As AI blurs the line between what’s real and what’s machine-generated, ethical concerns sit at the core of any AI ML development initiative. Machine learning models have moved far beyond their early versions, and today’s models can do things well beyond human limits, so the need for responsible usage and deployment is greater than ever. By understanding these concerns and implementing ethical AI best practices, we can work toward responsible AI frameworks that augment human capabilities while keeping risks in check.

AI has come a long way from being sci-fi stuff. Today, it's the bedrock of business decisions. It's everywhere: in what we see, what we buy, what we chase, and even in our daily jobs. But such rewarding advancements don't come alone. They bring several ethical questions in their trail. As AI moves front and center, some pressing questions are here to stay.

How reliable are AI algorithms?

How can one possibly protect privacy in a world full of data-hungry models?

What happens to humans as a workforce as machines become smarter?

Any AI development company that strives for responsible artificial intelligence development has to find answers to the questions above, but honestly, there are no easy answers. From bias to transparency, privacy to job displacement, there's no better time than now to discuss ethics in AI.

In this article, we explore the key ethical concerns in Artificial Intelligence, the response from industry regulators, and the challenges and solutions in this era of advanced machine learning.

Why Do Ethics in AI Matter?

AI-based warehouse robots imitate human actions. Neural networks, which are key to AI ML development, loosely imitate the human brain: they are made up of nodes, or artificial neurons, that pass information to one another. Just as the human brain picks up data from its environment, these ML algorithms are trained on real-life data. The point is that all the AI tech we currently have is a reflection of the human world. Ethics are at the core of human existence. So, any tech based on human behavior must also be built with ethics in mind.

So, now the question is, what is ethical AI?

Can an AI credit-scoring algorithm that uses ZIP codes to decide whether a person living in a particular ZIP code is creditworthy be called ethical? What type of bias is the AI reflecting here? Should insurance companies be allowed to track our every movement to gauge our fitness, only to sell us insurance policies? Should all our micro-payments on UPI apps be tracked to determine our loan capacity? Isn’t this privacy invasion in the name of personalized services?

The list of concerns related to ethics in AI and machine learning is long. However, as all AI/ML development tech is a reflection of human life, ethics are key to keeping it on track.

Let’s understand the key ethical challenges in AI in detail to reflect on this dilemma.

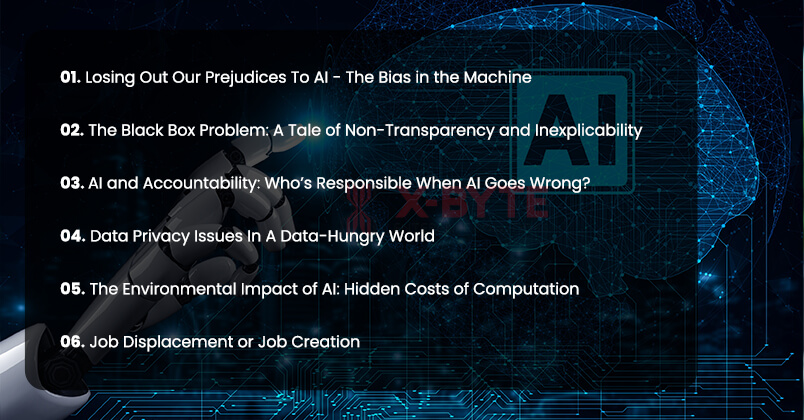

Top Ethical Concerns of AI in 2025 and their Solutions

Passing Our Prejudices On To AI - The Bias in the Machine

Bias is arguably the most talked-about issue in AI ethics. AI systems, no matter their size, tend to learn from data rooted in societal or historical biases. As a result, the algorithms can perpetuate discrimination or even amplify it.

Take facial recognition, for instance. It's one of the most troubling examples, with systems criticized for misidentifying people of color. Similarly, hiring algorithms have favored male candidates for certain positions simply because they were trained on male-dominated data.

The math is simple: biased input tends to produce biased output. And that, by all means, is a real-world problem. A 2023 survey ranked bias and lack of transparency among the top concerns for organizations that work with AI models. Interestingly, 70% of the organizations surveyed recognized the importance of having established guidelines for AI usage. Yet, only 6% had stringent policies in place.

Over the years, we've witnessed the not-so-good side of biased AI, from unexplained loan denials to criminal sentencing and even faulty healthcare recommendations. What matters most is that these scenarios aren't just hypothetical. They affect real human lives. Imagine an AI screening tool in a hiring process filtering out deserving female candidates only because the hiring data is biased.

The big question is, how do we control AI bias?

The answer starts with diverse, representative data, followed by strong audits and rigorous testing to detect unfair patterns. New York City has already introduced a first-of-its-kind law that requires bias audits for AI hiring tools. It's a bold move, hinting at a quietly growing movement to hold AI algorithms accountable. Many leading AI companies have also pledged to prioritize reducing bias across their existing models.
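To make the idea of a bias audit concrete, here is a minimal Python sketch of one common check: comparing selection rates across groups and computing an impact ratio (each group's rate divided by the highest group's rate). The sample data, the group labels, the `impact_ratios` helper, and the 0.8 threshold (the widely cited "four-fifths" rule of thumb) are all assumptions for illustration. A real audit would follow whatever methodology the applicable regulation requires.

```python
from collections import defaultdict


def impact_ratios(records):
    """Map each group to (selection_rate, impact_ratio).

    `records` is an iterable of (group, was_selected) pairs.
    The impact ratio is the group's selection rate divided by the
    highest selection rate observed across all groups.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1

    rates = {group: selected[group] / totals[group] for group in totals}
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so a ratio is undefined.
        return {group: (0.0, None) for group in rates}
    return {group: (rate, rate / best) for group, rate in rates.items()}


# Hypothetical screening outcomes, invented for this example.
records = (
    [("men", True)] * 40 + [("men", False)] * 60
    + [("women", True)] * 24 + [("women", False)] * 76
)

THRESHOLD = 0.8  # assumed rule-of-thumb cutoff, not a legal test

report = impact_ratios(records)
for group, (rate, ratio) in sorted(report.items()):
    status = "needs review" if ratio is not None and ratio < THRESHOLD else "ok"
    print(f"{group}: selection rate {rate:.2f}, impact ratio {ratio:.2f} ({status})")
```

In this made-up sample, women are selected at a rate of 24% against 40% for men, which gives an impact ratio of 0.6 and would be flagged for a closer look.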

The Black Box Problem: A Tale of Non-Transparency and Inexplicability

Many AI models operate as "black boxes," meaning even their creators cannot fully explain how the models arrive at a given outcome. This lack of transparency is fast becoming an ethical concern.

Imagine an AI system denying an individual's mortgage application and flagging them as a security risk. Wouldn't you want to know why? That's why explainability is no longer a nice-to-have feature in sensitive apps. It's essential to build trust and credibility.

However, the real problem is challenging an AI-led outcome when neither regulators nor users can explain or understand how the model works. Such "black boxes" can also hide biased logic. For example, an AI model in a healthcare facility might recommend a particular treatment for a certain medical condition. However, if the doctors can't find the rationale behind it, they will hesitate to follow it, which can delay care for the patient.

The most reliable way to address transparency issues is to develop explainable AI (XAI) techniques that let humans see what's under the hood. Feature importance scores, interpretable models, and written documentation of how AI models are trained are highly recommended.

For new AI companies, the EU's AI Act could be an example to follow. Widely described as the world's first comprehensive AI law, it requires many AI systems across different domains to disclose when AI drives decisions. Providers are also expected to give clear explanations of how those decisions are reached.
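Feature importance scores can be estimated even for a model you cannot look inside. One widely used approach is permutation importance: shuffle one input column at a time and measure how much accuracy drops. The sketch below uses only the Python standard library. `toy_model`, the feature names, and the synthetic data are hypothetical stand-ins for illustration, not any real lender's model.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Average drop in accuracy when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with only this one column replaced.
            permuted = [
                row[:col] + (value,) + row[col + 1:]
                for row, value in zip(rows, shuffled)
            ]
            drops.append(baseline - accuracy(model, permuted, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical 'black box': approves based on income and debt only."""
    income, debt, shoe_size = row
    return income - 2 * debt > 20


# Synthetic applicants: (income, debt, shoe_size). All values are invented.
data_rng = random.Random(42)
rows = [
    (data_rng.uniform(10, 100), data_rng.uniform(0, 30), data_rng.uniform(36, 46))
    for _ in range(200)
]
# Labels come from the model itself, so baseline accuracy is 1.0 and any
# drop after shuffling is caused purely by the shuffled feature.
labels = [toy_model(row) for row in rows]

feature_names = ["income", "debt", "shoe_size"]
scores = permutation_importance(toy_model, rows, labels)
for name, score in zip(feature_names, scores):
    print(f"{name}: {score:.3f}")
```

Because `toy_model` never reads `shoe_size`, shuffling that column changes nothing and its score is zero, while `income` and `debt` show a clear drop. On a real model, the same kind of report helps reviewers spot features that should not be driving decisions.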

AI and Accountability: Who’s Responsible When AI Goes Wrong?

As AI systems become more autonomous and make decisions that have real-world consequences, the question of accountability becomes increasingly important. When an AI system makes a mistake - whether it’s a self-driving car accident or a flawed medical diagnosis - who is held responsible?

This issue is complex and involves multiple stakeholders:

- AI developers and companies

- Users of AI systems

- Regulatory bodies

- The AI systems themselves (in cases of advanced AI)

Developing clear, responsible frameworks for AI accountability is crucial. This might involve:

- Creating AI liability laws

- Building awareness of AI ethics, including through AI ethics consulting

- Establishing industry standards for AI development and deployment

- Implementing robust testing and validation processes for AI systems

- Developing AI insurance models to manage risks

Data Privacy Issues In A Data-Hungry World

There's no denying that modern AI thrives on personal data, which raises questions about consent and privacy. AI models are constantly being trained on huge datasets, from social media posts to health records. So, who owns the data, and how is it being used?

No matter what, privacy should be the cornerstone of AI development to stop it from overstepping. One vivid example is Italy's temporary ban on ChatGPT in 2023 following allegations that it collected personal data illegally. Italy became the first Western country to take a strong stand against generative AI, underscoring the seriousness of privacy.

The ban came shortly after an incident in which some users' conversation details were exposed to other users, highlighting the dangers of large-scale data collection. As AI systems become more advanced and retain more of what humans feed them, the risk of misuse or exposure of sensitive information only grows.

So, how can we protect privacy?

The answer is data governance, which includes anonymizing data, getting user consent for personal information, and staying compliant with regulations like GDPR. Forward-thinking companies have also embraced privacy by design, building robust privacy considerations into AI systems from the ground up rather than as an afterthought. For instance, some AI healthcare apps use on-device processing for sensitive data, meaning personal data never leaves a user's phone.
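As a rough illustration of what such governance can look like in a data pipeline, the Python sketch below keeps only records with user consent, drops direct identifiers, and replaces the user ID with a keyed hash. The field names, the `prepare_for_training` helper, and the hard-coded key are assumptions made for this example. Note that keyed hashing is pseudonymization rather than full anonymization, so the output would typically still count as personal data under GDPR.

```python
import hashlib
import hmac

# Hypothetical field names, chosen for this example only.
DIRECT_IDENTIFIERS = {"name", "email"}


def pseudonymize(value, secret_key):
    """Return a keyed SHA-256 hash so the raw identifier is never stored."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_for_training(records, secret_key):
    """Keep consented records, strip direct identifiers, hash the user ID."""
    prepared = []
    for record in records:
        if not record.get("consent", False):
            continue  # no consent, so the record is not used at all
        cleaned = {
            key: value
            for key, value in record.items()
            if key not in DIRECT_IDENTIFIERS and key != "consent"
        }
        cleaned["user_id"] = pseudonymize(str(record["user_id"]), secret_key)
        prepared.append(cleaned)
    return prepared


# Assumption: in practice the key would come from a secrets manager.
SECRET_KEY = b"example-key-do-not-use-in-production"

records = [
    {"user_id": "u-1", "name": "Asha", "email": "asha@example.com",
     "age_band": "30-39", "consent": True},
    {"user_id": "u-2", "name": "Ben", "email": "ben@example.com",
     "age_band": "40-49", "consent": False},
]

training_rows = prepare_for_training(records, SECRET_KEY)
print(training_rows)
```

Only the first record survives, and it carries a hashed `user_id` and the coarse `age_band` instead of a name or email address.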

Global regulations are also catching up. Ethical AI firms are setting the bar high on privacy to build customer trust. A 2023 poll showed that over 80% of Americans favor clear and transparent AI regulations to protect their privacy. After all, respecting privacy shouldn't be optional if we want society to reap the benefits of Artificial Intelligence. By all means, it's a non-negotiable element of responsible AI development.

The Environmental Impact of AI: Hidden Costs of Computation

While AI has the potential to help solve environmental challenges, it also has a significant environmental footprint. Training large AI models like GPTs requires enormous amounts of computational power, which translates to high energy consumption and carbon emissions. An MIT report noted that the power requirements of data centers in North America alone increased from 2,688 megawatts to 5,341 megawatts between 2022 and 2023.
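A quick back-of-the-envelope check, using only the two megawatt figures quoted above, shows how steep that increase is:

```python
# Data center power requirements in North America, as quoted above (megawatts).
start_mw = 2688
end_mw = 5341

increase_mw = end_mw - start_mw
growth_pct = increase_mw / start_mw * 100

print(f"Increase: {increase_mw} MW ({growth_pct:.1f}% growth)")
```

That works out to an increase of 2,653 megawatts, or roughly 98.7%, meaning demand nearly doubled over the period.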

Addressing this challenge requires:

- Developing more energy-efficient AI algorithms and hardware

- Using renewable energy sources for AI computation

- Considering the environmental impact in AI design and deployment decisions

- Encouraging research into “Green AI” that prioritizes environmental efficiency alongside performance

By addressing these additional challenges, we can work towards a future where AI is not only powerful and innovative but also ethical, accountable, and sustainable.

Job Displacement or Job Creation

“Will AI take my job?” That was the big headline when AI models went mainstream a few years back. In the age of advanced machine learning, the question is more valid than ever. Gen AI can draft emails on your behalf, write code, help diagnose medical conditions, fly drones, drive cars, and more.

The chief concern here is job displacement: there’s a strong possibility that AI will make specific job roles obsolete and leave human workers in the lurch. Industry stats tell a mixed story. A 2023 analysis by Goldman Sachs estimated that the equivalent of almost 300 million full-time jobs could be affected by AI automation, which is staggering. Roles built around repetitive or routine tasks are generally considered more at risk than work that depends on judgment and creativity.

The tension is real, considering what Gen AI can do. The early instances where AI chatbots handled customer queries with ease were impressive and unsettling in equal measure. In time, as these technologies evolve, the scope of some human tasks may shrink further. However, job displacement is only half of the story.

History suggests that technology has often created more jobs than it has taken away. AI may have already replaced certain tasks, but it has also freed people up for higher-value work. For instance, a human analyst can now spend more time creating strategies and interpreting data than juggling paperwork. Besides, new categories of AI-related jobs are fast emerging.

Positions like AI ethics specialists, AI maintenance engineers, and data curators are in high demand.

A McKinsey survey has also shown that a plurality of companies expect minimal change in the size of their human workforce due to AI. They plan on reskilling or upskilling their employees to create new job roles instead of laying them off. This is indeed inspiring.

The Way Forward For Ethical AI Development

Ethics in AI is all about foresight: taking into account the implications of modern technology and driving it toward positive outcomes. At the same time, it's also about ensuring that AI serves humanity and never the other way around. For forward-thinking businesses, ethics in AI is also about sustainability.

In some cases, common AI concerns are not even applicable. Deloitte AI Institute’s executive director, Beena Ammanath, has an interesting take on this. She describes a scenario where the focus is on accuracy rather than bias.

For instance, if AI is used to predict the possibility of a jet engine failing, we are focused on accuracy rather than the bias of the algorithm. So how do ethical concerns arise here? Suppose aviation companies deploying AI models to predict jet engine failures find a pattern in cases where pilots were applying more thrust than guidelines recommend. If this data is used to create guidelines that make pilots aware of the top reasons for engine failures (with applying excessive thrust listed as one of them), then AI is being used ethically. However, if this data is used for pilot performance reviews, it raises privacy concerns and a debatable scenario of ethics in AI.

Developing Responsible AI Frameworks

The right organizational culture, the right AI governance, and adherence to responsible AI engineering frameworks are key to responsible AI. Enterprises need to follow ethical AI practices to make sure their AI ML solutions do not cross any ethical boundaries. First, organizations that provide machine learning as a service need to understand that AI is meant to augment human intelligence, not supersede or replace it.

Second, they need to treat data as belonging to a third party. For instance, if an AI development company is creating a model that trains on a client’s customer data, the data belongs to the client, not to the AI development company. There must also be transparency. The client must know what type of data is being used, who is using it, and what the ethical or legal implications of using that data are.

Finally, ethical and responsible AI frameworks also take into account how the development will affect the end user. For instance, if an AI-based algorithm provides personal shopping recommendations to a user, how those recommendations affect the user is a key ethical AI concern.

So what is a responsible AI framework? It is an AI development framework that incorporates the following key AI ethics best practices:

- An organizational culture that prioritizes ethical AI development

- Robust AI governance structures and policies

- Ethical AI engineering frameworks throughout development

- Ensuring AI augments human intelligence rather than replacing it

- Treating data as third-party property, especially when working with client data

- Maintaining transparency in data usage, processing, and implications

- Considering the ethical and legal impacts of AI on end-users

- Regularly assessing and mitigating potential biases in AI algorithms and outputs

Wrapping Up

With fast-changing regulations, public scrutiny is bound to intensify. That means ethical lapses can be downright expensive. So, the smart option now is responsible AI development. That's precisely what we do at X-Byte Solutions.

As a full-scale AI ML development service company, we are committed to the guiding principles and sustainable practices of responsible AI. From rigorously testing for AI bias to being transparent about algorithms and safeguarding sensitive user data, we build these commitments into our work.

In essence, we keep humans in control of every technology we build. Our AI development process has functional and ethical checkpoints integrated into every step, and they don't slow progress down. Instead, they ensure that every bit of progress truly benefits our clients and, in turn, the wider community.